interesting data protection/tech stuff lately

I've been on vacation for a while and just now returned home. Still gotta reply to some e-mails, sorry! But also: Got a huge backlog of interesting data protection stuff to catch up on, and why not write about the most interesting things?

When you talk to people about data protection and privacy, they usually think of social media, ads, and agreeing to share information with 1557 partners in a cookie banner. It's all about the evil big guys. Child surveillance is less in the spotlight, and what's often forgotten is how supposedly "normal" school tech can put both child and parent in danger.

Children are expected to have phones or tablets for school and accounts with several apps, each with more or less tracking and cloud services. That clashes with the privacy that people leaving abusive situations need. Lots of data harvesting is enabled by default (forbidden in the EEA, but nonetheless ignored sometimes, and still an issue elsewhere), which includes information about location. Sometimes these permissions were granted before leaving the abusive situation, and people forget to disable them later or miss some of the settings.

Think of when your phone asked you to automatically upload images to the cloud, or to always tag them with your location; you might have said no. But many people enjoy being able to filter by location, love the metadata, and have their photo app automatically make a little slideshow of the vacation. They want their photos saved in the cloud, with access from anywhere.

Unfortunately, that also means any abuser with access to the account gets a live feed of where the person is with each cloud upload. Months or years after setting that up, back when everything was still okay, it is easy to forget to disable it. Even if you remember, it might be confusing to shut off.

That is why education in data protection, privacy and how to use your tech is so important, especially for people suffering from abuse and stalking. Netzpolitik [DE] had a great article on this. Reading it gave me new appreciation for when my phone prompts me to reevaluate my privacy settings, saying "[App] has had access to all photos. Do you want to keep that?"

Coming home from vacation, I immediately had to rush to my computer to make it in time for a workshop by Epicenter.Works that I signed up for a while ago, which was about their prototype for their upcoming service Whoidentifies.me (demo).

The core idea of the project is providing an accessible way for the public (esp. citizens, NGOs, worker unions, consumer rights groups) to check who (companies, public authorities) accesses which data within the emerging eIDAS ecosystem, which involves the upcoming European Digital Identity Wallets (EUDI Wallets for short) and identification. That's a whole topic for a dedicated blog post some time, together with OS age verification, the apps launching for that, etc., so I won't get into that now.

Basically, different databases and public information are crawled and combined to give an accurate view of who makes use of the eIDAS system so people can make informed decisions about who gets their data. Ideally, you'll be able to filter companies by business type, use case and queried attributes in order to enable risk assessments and recognize misuse at an early stage.

In the workshop, I asked if it is comparable to Haveibeenpwned.com, in the sense that I can enter some specific info and see who gets that data, but that is not possible and out of scope; it really is only for researching companies and public institutions and what exactly their role is when accessing the digital identification in general. Here's a demo page for Deutsche Bank - the information is not real and just a placeholder, but this is the information they are hoping to show and collect when the project launches.

For more information on privacy in digital public infrastructures (like digital identity, digital payment and data exchange systems developed or operated by or on behalf of a government), here are some course materials by Epicenter.Works on the topic.

More about massive flows of data: The ARD-Mediathek has a great documentary called Gefährliche Apps (dangerous apps). It shows how easy it is to access truly massive amounts of extremely precise smartphone location data that is not anonymous - the people in the documentary received this data as a free sample. The IDs included with each location can be filtered, which means you can see all locations for a specific ID. Tracked over a period of time, the data shows exactly where people live, work, go to school and otherwise spend their time, down to exact rooms in buildings. This makes identification easy and lets you build a profile on them and their daily routine.
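To make it concrete how little effort that filtering takes, here is a minimal Python sketch. Everything in it is my own assumption for illustration: the rows in `PINGS` are invented, the column layout (ID, timestamp, latitude, longitude) is a guess at what such a sample might look like, and the helper names `pings_for`, `most_common_place` and `guess_routine` are hypothetical. It is not the method used in the documentary.

```python
from collections import Counter
from datetime import datetime

# Synthetic sample rows: (advertising_id, ISO timestamp, latitude, longitude).
# All values are invented for illustration.
PINGS = [
    ("id-A", "2025-03-03T02:10:00", 48.13712, 11.57541),
    ("id-A", "2025-03-03T10:30:00", 48.15021, 11.58103),
    ("id-A", "2025-03-03T23:45:00", 48.13714, 11.57539),
    ("id-A", "2025-03-04T03:05:00", 48.13711, 11.57543),
    ("id-A", "2025-03-04T11:15:00", 48.15019, 11.58101),
    ("id-A", "2025-03-04T14:40:00", 48.15023, 11.58099),
    ("id-B", "2025-03-03T09:00:00", 52.52001, 13.40495),
]

NIGHT_HOURS = set(range(0, 6)) | {22, 23}   # assumed "at home" hours
DAY_HOURS = set(range(9, 17))               # assumed "at work or school" hours


def pings_for(ad_id, pings):
    """Keep only the rows that belong to one advertising ID."""
    return [p for p in pings if p[0] == ad_id]


def most_common_place(pings, hours):
    """Return the most frequent rounded coordinate seen during the given hours."""
    counts = Counter(
        (round(lat, 3), round(lon, 3))  # 3 decimals is roughly a city block
        for _, ts, lat, lon in pings
        if datetime.fromisoformat(ts).hour in hours
    )
    return counts.most_common(1)[0][0] if counts else None


def guess_routine(ad_id, pings):
    """Guess a likely home and work location for a single ID."""
    own = pings_for(ad_id, pings)
    return {
        "likely_home": most_common_place(own, NIGHT_HOURS),
        "likely_work": most_common_place(own, DAY_HOURS),
    }


if __name__ == "__main__":
    print(guess_routine("id-A", PINGS))
```

A filter, a rounding step and a counter are enough to turn a "pseudonymous" ID into a likely home and workplace, which is why this kind of data can't seriously be called anonymous.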

This data is collected by mundane apps like weather apps, dating apps, fitness apps and games, and then sold to their 800+ partners. Unfortunately, this can have dire consequences not many are aware of or think are truly possible (because the bad shit always happens elsewhere, but never to them).

This location data has been used to target people in war and to harass and intimidate journalists and dissidents who have fled to other countries, and it can expose the daily paths of vulnerable officials. It can be used to stalk you, to find out where you walk your dog or when your apartment is empty. It can be used against you when you criticize Russia or Israel. It is trivial for any individual to buy data based on a location they know you frequent and then go from there.

Additionally, we have seen lately that the techno-feudalists in the US are becoming increasingly thin-skinned about anyone attacking their revenue streams or criticizing their fascist moves in public, down to the incident of Karim Khan losing his Microsoft access, and OpenAI and Palantir increasingly going after journalists and NGOs publicly exposing their unethical behavior and politics. These are companies worth billions of dollars, with massive data streams and political power, whose products are implemented everywhere at the moment, with especially Palantir used for public surveillance.

With each step going outside, you are potentially not only building a profile with your phone location data together with a full social media profile, but public cameras will be, or are already, using you just existing in public for AI training and facial recognition. Together with proposed laws in Germany that would use internet image and video material to identify you (more on that further down), we are quickly heading towards a point of total surveillance.

One company mentioned was Datarade; feel free to look around on the site to see how much is truly collected and offered. For example, here's one for global mobile location data, 70B+ daily signals, or this one for 250B real-time daily events of location/foot traffic.

How reliant is your workplace on Microsoft? In my case, if Microsoft stopped doing business with European governments or companies, I would be unable to do any work at all. We are fully reliant on the entire Office365 portfolio and more. I'd be unable to log in, to receive or write emails, or have access to any of the databases I need. This is scary, especially with the current US administration. We make ourselves insanely vulnerable.

The Future of Technology Institute (FOTI, a pan-European think tank) published a paper called Cloud Defense - An exposed European flank about Europe's dependency on US cloud services, especially for critical national security functions. It's actually nuts - handing another country, especially one like the US, the keys to such sensitive and important things. The risk of a kill switch and the subsequent loss of data, loss of the ability to work, and the data being used against us is maddening. The EU needs to be more sovereign and its tech stack needs to withstand geopolitical tensions and shifts.

Unfortunately, US tech companies have reacted with promises and options of a sovereign cloud or supposed data silos whose data never flows to the US, but those are meaningless and hard to actually enforce or control, and the US CLOUD Act still applies. Our main path forward is leaving and excluding hyperscaler platforms that remain exposed to foreign jurisdiction and geostrategic leverage.

Speaking of hyperscalers: Proton wrote about Microsoft 365 Copilot flex routing, which undermines GDPR compliance and the above promises of an air-gapped sovereign cloud or data center. On April 17, 2026, Microsoft started sending Copilot data to foreign servers for processing whenever European data center capacity reaches its limit - which means processing may actually happen in the US, Canada, or Australia. This happens by default, so your organization needs to either opt out or cover it in its Microsoft DPIA.

Moving on to a topic adjacent to privacy: The European Commission published a paper about the impact of digital technologies on European democracy, especially the so-called attention economy, which prioritizes engagement over accuracy and therefore highlights negative and dangerous content the most. It creates fractured perceived realities in which the goal is no longer to (just) make individuals believe false claims, but instead to distract and generate distrust. The information flow online overloads us cognitively, which makes it harder and harder to discern agendas. They also point out the “fantasy-industrial complex” that involves interactions between politicians, corporate actors, platforms, legacy media, influencers, and citizens to create fabricated versions of reality which are hard to reconcile.

This is especially big in the AI age, as anything not fitting into a particular worldview can be dismissed as being generated by AI, and a lot of propaganda is indeed generated with it. The AI tools embedded in the platforms give users the "illusion of knowledge" due to the fluent language and realistic visual output, which makes it seem as if informational gaps have been bridged when they actually haven't been. According to the report, this creates the conditions for a distinct new informational regime which is termed epistemia; it lowers the threshold of responsibility in content creation (“the AI said it, not me”) and may create a false sense of competence in users.

The problem is: With no trustworthy information, it's hard to hold governments and individuals accountable.

Estimates put misinformation at around 1%-10% of the news content consumed online, with prevalence increasing to 10%-30% for content involving contentious topics like climate, health, or wars.

Regardless, the people behind the paper see a huge potential for social media and the internet in general to actually help foster community and free expression and democratic participation, which just has to actually be enabled by the correct incentives and design, instead of rewarding harmful effects.

The publication recommends:

- creating alternative public spaces that do not depend on the attention economy; also in the physical world, not just online

- reinforcement of crowd-sourced knowledge (good examples are Wikipedia, Community Notes, etc.) and better fact-checking

- regulations for more user agency (improving platform design from behavioral science insights like cool-offs or accuracy prompts, awareness campaigns and media literacy classes)

- demonetizing disinformation actors, and changing the business model of the platforms to no longer revolve around attention

- less polarizing algorithms, more decentralized platforms, more EU sovereignty.

I can only recommend reading the full paper if you have time! It's easier to read than you might think, has pretty graphics, parts have been highlighted and there are bullet point sections for better legibility :)

other news, quickly

The Datenschutzkonferenz (DSK) of Germany published a press statement about three controversial legislative initiatives by the German government which would significantly increase digital surveillance and data collection for criminal investigations and crime prevention. They would enable police to use biometric data from internet sources (your selfies, your videos and voice uploads, etc.) together with large datasets (e.g., police records, seized devices, telecom data, internet data) to identify people and analyze them using automated systems to generate new intelligence. This means AI could be used to identify patterns, relationships, and risks automatically. The risks are obviously mass surveillance, false positives, lack of transparency and disproportionate intrusion on our rights based on very vague "prevention" promises. The DSK is against this blanket allowance and wants strict limitations and clear legal thresholds so the use is only in exceptional, well-defined cases with a data scope limit and exclusion of AI where not controllable.

Related: The new police law in North Rhine-Westphalia, Germany, which enables the use of Palantir, is being accused of being unconstitutional.

The European Data Protection Board (EDPB) released their Annual Report for 2025. Mostly about the Helsinki Statement/Initiative, balancing regulatory simplification with fundamental rights protection, cross-regulatory cooperation (DSA, DMA, AI Act), harmonizing national and EDPB guidance, and practical compliance support. Biggest fine: Ireland with €530M due to TikTok data transfers to China.

Related: They also published guidelines for processing personal data for scientific research purposes. This is especially interesting to me because I work with health data and I am very familiar with the recent EU push for RWD (real world data), more data collection from health insurance companies, and efforts like DARWIN EU.

More and more AI-powered military tech, as written about by Correctiv [DE]. Munich has become a hotspot for the development of Precision Mass Warfare and optimizing the Kill Chain, especially due to the company Helsing. The entire topic is difficult to discuss: What data is used for the training and what is collected in the actual war situation? Who is responsible when innocent people are killed due to a bot mistake? Is this better than letting civilians go to war for their rich governments and getting traumatized? If other countries are arming up on new war tech, would it be irresponsible for you to not participate?

Digitalrechte.de [DE]: The European Commission decided that the Digital Services Act (DSA) should also apply to ChatGPT, as it can be used as a Very Large Online Search Engine (VLOSE) as well. This means the risk minimization measures and analyses, transparency reports, data access for research, external audits, reporting of illegal content and more need to be implemented.

AlgorithmWatch is looking at how LLM use can alter the decision making of politicians in important positions. What can happen if a politician relies on an AI summary of a very complex policy area? The picture that is being painted so far is a confusing one: Politicians seem to be using it, but don't want to be pinned down publicly on it, and don't want to share (some of) the prompts.

Germany's Federal Ministry for the Environment, Climate Action, Nature Conservation and Nuclear Safety (BMUKN) released a recommendation for sustainable AI. They claim environmentally sustainable AI and economic competitiveness are not in tension, but can mutually reinforce each other. They focus on the specialized niche use cases of AI developed by small and medium-sized enterprises for predictive maintenance, quality control, machinery control and process optimization, so this is a little removed from the whole US-based huge data centers to facilitate sex roleplay with huge reasoning GPAI. Therefore, they conclude those use less energy, require less hardware, can be used more locally and provide more digital sovereignty. They suggest standardized, publicly accessible, independently audited environmental reporting frameworks for AI's computing-related impacts, funding to reduce climate impact and to research ways to use less electricity and water, and dedicated "green models" or green modes.

BayLDA (the Data Protection Authority for Bavaria, Germany) has published a recommendation/handbook for AI use in Bavaria's public administration. I'm not in Bavaria, but it is still useful to see and implement in practice elsewhere in Germany when working in the public sector. At work, I am moving to fully solidify my role as a data protection coordinator and my boss involves me in some AI projects as well, so this is something I will be referencing.

bonus

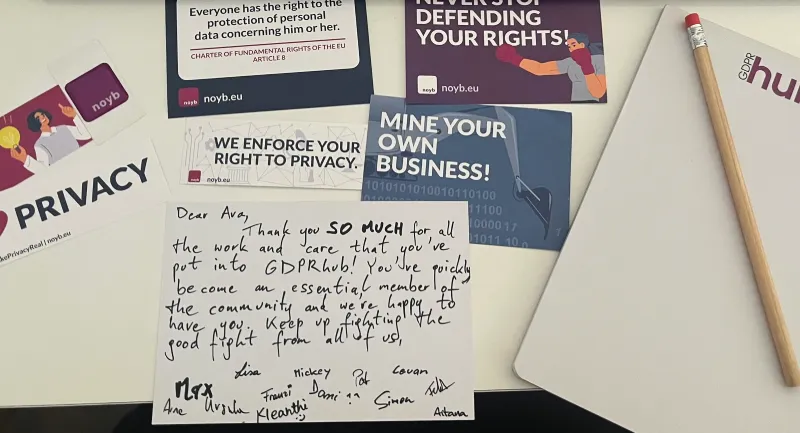

Got my Gold Member package from noyb, which included a sweet card.
